Why AI Features Need Architecture, Not Just Models

Architecture patterns and design decisions for building reliable LLM-powered features in modern frontend systems

AI features can appear as a chat assistant beside a product or as part of the system itself. The difference is not the model. It’s the architecture around it.

What I’m noticing in real AI products

I haven’t built a lot of AI features yet.

But over the past months, I’ve been paying close attention to how AI features appear in real products.

Sometimes they show up as a small assistant sitting beside the product: a chat box where you can ask questions or generate something.

Other times, the AI lives directly in the workflow. It understands the artifact you’re working on, the context around it, and the actions you can take next.

At first glance these approaches look similar.

Under the surface, they are very different systems.

That difference becomes much clearer once you look at how these systems are designed.

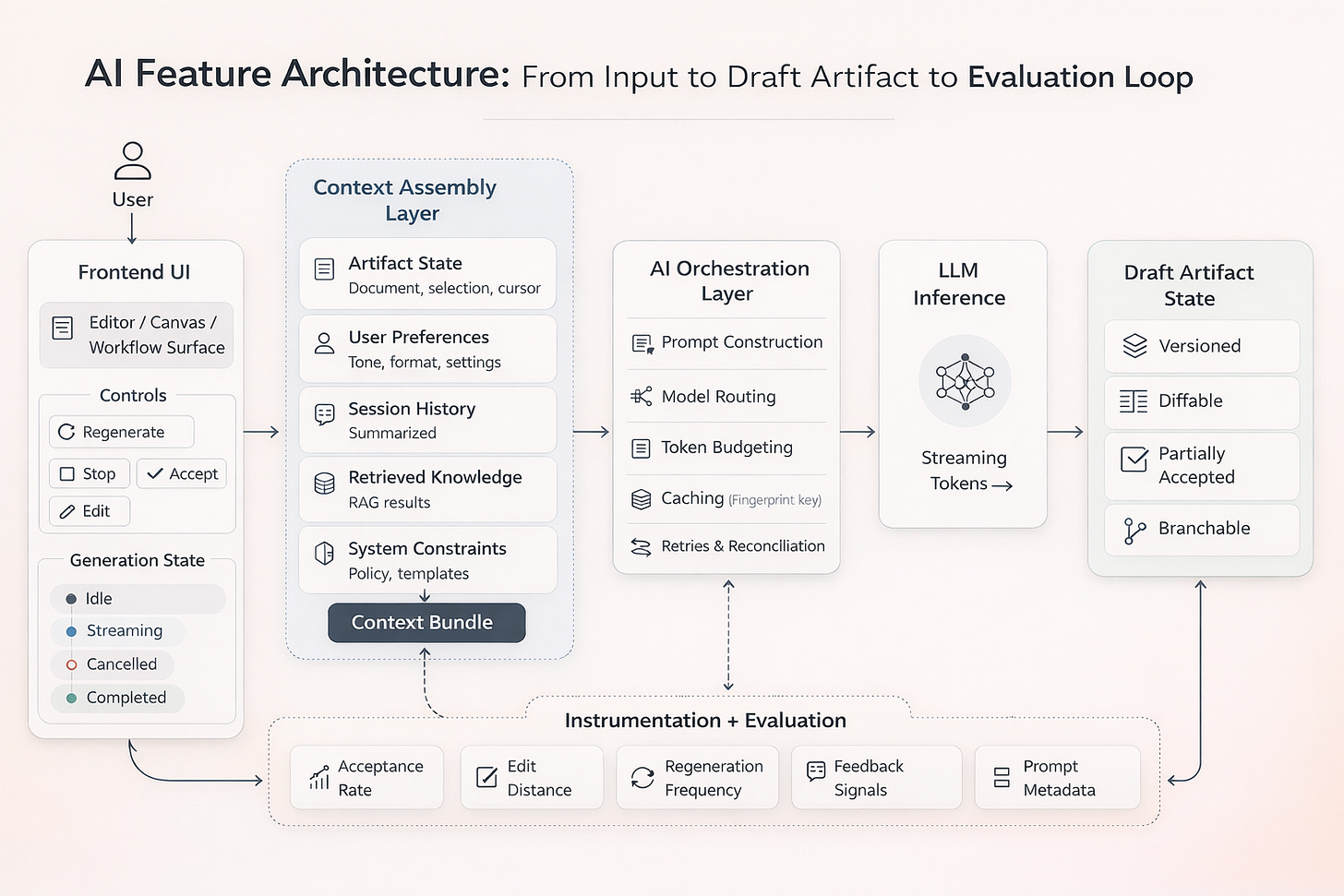

Context construction is infrastructure

The strongest systems do not simply “call AI.” They construct context explicitly.

In weaker implementations, the API boundary is obvious. Input goes out. A block of text comes back. It is rendered with little awareness of product state.

In more mature systems, generation reflects layered state.

Where context comes from (state layers)

Context is typically assembled from multiple sources:

Current artifact state

User profile and preferences

Session history

Retrieved documents or indexed knowledge

System-level instructions and constraints

This implies an architectural layer responsible for context building, not just ad-hoc string concatenation.

It also forces you to define ownership boundaries clearly: what lives in client state, what is assembled on the server, and what becomes persistent application memory.
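To make the idea of a dedicated context-building layer concrete, here is a minimal sketch in TypeScript. The names (`ContextLayers`, `buildContext`, `renderPrompt`) and the section format are my own assumptions for illustration, not taken from any product or library. The point is only that each state layer is an explicit, typed input and that assembly order is fixed.

```typescript
// Hypothetical shapes for illustration; none of these names come from a real library.
interface ContextLayers {
  systemInstructions: string;   // system-level instructions and constraints
  artifact: string;             // current artifact state
  userPreferences: string[];    // user profile and preferences
  sessionHistory: string[];     // earlier turns in this session
  retrievedDocuments: string[]; // retrieved documents or indexed knowledge
}

interface ContextSection {
  source: keyof ContextLayers;
  content: string;
}

// Assemble sections in a fixed order so the same state always yields the same prompt.
function buildContext(layers: ContextLayers): ContextSection[] {
  const sections: ContextSection[] = [
    { source: "systemInstructions", content: layers.systemInstructions },
    { source: "artifact", content: layers.artifact },
    { source: "userPreferences", content: layers.userPreferences.join("\n") },
    { source: "sessionHistory", content: layers.sessionHistory.join("\n") },
    { source: "retrievedDocuments", content: layers.retrievedDocuments.join("\n---\n") },
  ];
  // Drop empty layers instead of sending blank sections to the model.
  return sections.filter((section) => section.content.trim().length > 0);
}

// Rendering is kept separate from assembly so the prompt format can change independently.
function renderPrompt(sections: ContextSection[]): string {
  return sections.map((s) => `## ${s.source}\n${s.content}`).join("\n\n");
}
```

Because every section keeps its `source`, the same structure can later be used to decide which layers are assembled on the client and which on the server.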

Pruning, summarization, and ownership boundaries

As usage grows, context grows too.

Stronger systems make explicit decisions about:

When to summarize interaction history

What to persist long term versus keep session-scoped

Whether context assembly happens client-side, server-side, or in a hybrid model

When these boundaries are undefined, instability and cost creep follow.
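One way such a decision could look in code: keep the most recent turns that fit a token budget and hand the older ones to a summarization step. This is a sketch under assumptions. `estimateTokens` is a rough four-characters-per-token heuristic rather than a real tokenizer, and `pruneHistory` is a hypothetical helper.

```typescript
interface Turn {
  role: "user" | "assistant";
  text: string;
}

// Rough heuristic (an assumption, not a real tokenizer): about four characters per token.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

interface PrunedHistory {
  kept: Turn[];        // most recent turns that fit the budget, in original order
  toSummarize: Turn[]; // older turns to pass to a summarization step
}

function pruneHistory(turns: Turn[], budget: number): PrunedHistory {
  let used = 0;
  let cut = turns.length; // index of the first kept turn
  // Walk from newest to oldest so recent turns are kept first.
  for (const turn of [...turns].reverse()) {
    const cost = estimateTokens(turn.text);
    if (used + cost > budget) break;
    used += cost;
    cut -= 1;
  }
  return { kept: turns.slice(cut), toSummarize: turns.slice(0, cut) };
}
```

The summarization itself is left out on purpose. What matters here is that the cut-off is an explicit, testable rule instead of something that happens implicitly when a request fails for being too long.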

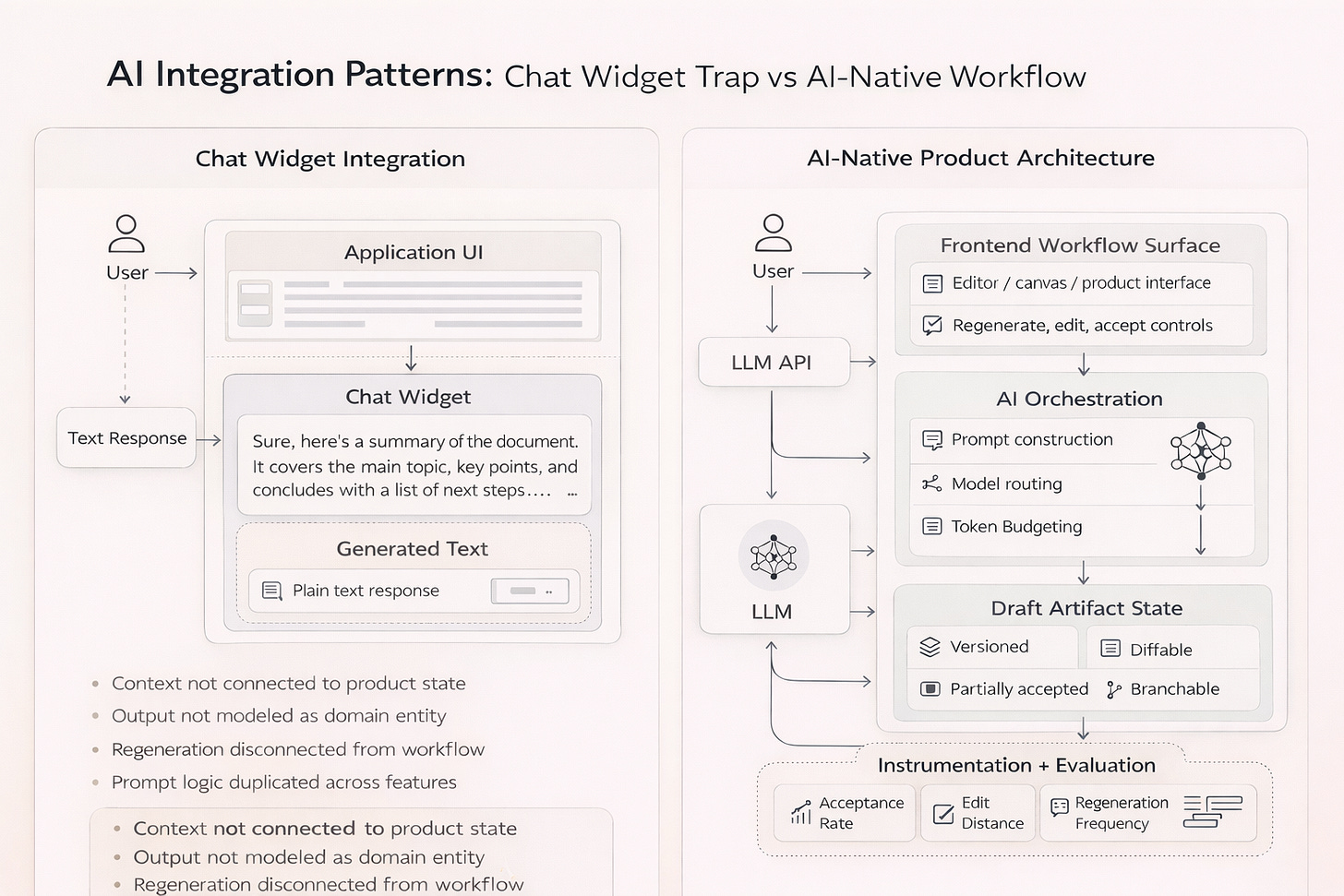

Treat AI output as draft state in LLM-powered applications

The most resilient systems treat model output as editable material, not a finalized and authoritative truth.

You can see this pattern clearly in tools like Notion AI, Cursor, or ChatGPT’s canvas-style interfaces. The model does not return a final answer that disappears into a chat log. Instead, the output appears inside an editable workspace where users can modify, accept, reject, or regenerate parts of it.

The AI behaves less like an answer engine and more like a collaborator working inside the artifact itself.

Versioning, partial acceptance, branching

In mature systems, generated content is:

Versioned

Comparable

Branchable

Partially accepted

Generated artifacts become domain entities, not transient responses.

This enables iteration without starting from scratch.
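A minimal sketch of what "generated content as a domain entity" might look like as a data model. All type and function names are hypothetical. Partial acceptance is modeled here simply as an `edited` version whose parent is the generated version it was derived from, which is one possible design among several.

```typescript
interface ArtifactVersion {
  id: number;
  parentId: number | null; // null for the first generation
  content: string;
  origin: "generated" | "edited";
}

interface Artifact {
  versions: ArtifactVersion[];
  acceptedId: number | null;
}

function createArtifact(): Artifact {
  return { versions: [], acceptedId: null };
}

// Append a version without mutating the input, so earlier states stay comparable.
function addVersion(
  artifact: Artifact,
  content: string,
  origin: ArtifactVersion["origin"],
  parentId: number | null
): Artifact {
  const version: ArtifactVersion = {
    id: artifact.versions.length + 1,
    parentId,
    content,
    origin,
  };
  return { ...artifact, versions: [...artifact.versions, version] };
}

function accept(artifact: Artifact, id: number): Artifact {
  if (!artifact.versions.some((v) => v.id === id)) {
    throw new Error(`Unknown version ${id}`);
  }
  return { ...artifact, acceptedId: id };
}

// Branches are versions that share the same parent.
function branchesOf(artifact: Artifact, parentId: number): ArtifactVersion[] {
  return artifact.versions.filter((v) => v.parentId === parentId);
}
```

With this shape, regenerating never overwrites anything: it adds a sibling version, and the user's accepted choice is just a pointer.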

UI affordances that reveal the model (regeneration, diffs)

You can often detect architectural maturity through interface details:

Prominent regenerate controls

Diff views between versions

Inline editing with tracked changes

Structured refinement prompts

When regeneration is disconnected from state, the product feels fragile.

This shift, from temporary output to structured artifacts inside a system, is something I’m also exploring while building a small application around retrospective intelligence. I wrote more about that idea in From a Box to an Intelligence Layer.

Streaming is where probabilistic output meets deterministic UI

Latency behavior reveals architectural discipline.

Some systems block until completion. Others stream incrementally.

A simple example: imagine an AI writing assistant generating a long technical explanation. In a blocking system, you wait 12 seconds and receive a full wall of text. If it heads in the wrong direction, you start over.

In a streaming system, you see the structure forming. After the first paragraph, you can stop generation, adjust the instruction, and continue.

The perceived intelligence comes from interaction control, not raw speed.

You can observe this in tools like ChatGPT, Claude, or Cursor, where responses stream incrementally. Users often interrupt generation, adjust the prompt, or refine instructions before the response completes. The interface allows continuous control over the generation process.

Cancellation, retries, and reconciliation

Well-designed streaming systems:

Maintain explicit generation lifecycle states

Handle cancellation cleanly

Reconcile partial output deterministically

Avoid duplicating artifacts during retries
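Explicit lifecycle states can be sketched as a small reducer. This is an illustrative assumption about how such a system might be structured, not a description of how any of the tools above work. The key idea is that every event carries a `requestId`, and events from a superseded or finished request are ignored.

```typescript
type GenerationState =
  | { status: "idle" }
  | { status: "streaming"; requestId: string; text: string }
  | { status: "done"; requestId: string; text: string }
  | { status: "cancelled"; requestId: string; text: string }
  | { status: "failed"; requestId: string; error: string };

type GenerationEvent =
  | { type: "start"; requestId: string }
  | { type: "chunk"; requestId: string; delta: string }
  | { type: "complete"; requestId: string }
  | { type: "cancel"; requestId: string }
  | { type: "fail"; requestId: string; error: string };

function nextState(state: GenerationState, event: GenerationEvent): GenerationState {
  if (event.type === "start") {
    // A new request always supersedes whatever was in flight.
    return { status: "streaming", requestId: event.requestId, text: "" };
  }
  // Ignore events from superseded requests, or events arriving after a terminal state.
  if (state.status !== "streaming" || state.requestId !== event.requestId) {
    return state;
  }
  if (event.type === "chunk") {
    return { ...state, text: state.text + event.delta };
  }
  if (event.type === "complete") {
    return { status: "done", requestId: state.requestId, text: state.text };
  }
  if (event.type === "cancel") {
    // Keep the partial text so the UI can decide what to do with it.
    return { status: "cancelled", requestId: state.requestId, text: state.text };
  }
  return { status: "failed", requestId: state.requestId, error: event.error };
}
```

Because the reducer is a pure function, the same sequence of events always produces the same state, which is what makes reconciliation deterministic.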

Common failure modes (duplicates, races, corrupted state)

Weaker systems reveal themselves through:

Duplicate entries after regeneration

Race conditions between parallel generations

Corrupted or partially persisted state

UI that desynchronizes from backend inference

Streaming makes architectural shortcuts visible quickly.
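Duplicate entries after a retry are often a persistence problem rather than a model problem. A minimal sketch of one mitigation, assuming each generation request has a stable identifier: make saving idempotent per request. `ArtifactStore` is a hypothetical in-memory stand-in; a real system would more likely enforce the same rule with a unique key in its database.

```typescript
interface SavedArtifact {
  requestId: string;
  content: string;
}

// Hypothetical in-memory store used only to illustrate the rule.
class ArtifactStore {
  private readonly byRequest = new Map<string, SavedArtifact>();

  // Saving is idempotent per requestId: a retry returns the existing
  // record instead of creating a second one.
  save(requestId: string, content: string): SavedArtifact {
    const existing = this.byRequest.get(requestId);
    if (existing !== undefined) return existing;
    const saved: SavedArtifact = { requestId, content };
    this.byRequest.set(requestId, saved);
    return saved;
  }

  count(): number {
    return this.byRequest.size;
  }
}
```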

Operational discipline in AI systems: cost control and evaluation loops

Cost and quality control separate experimental systems from sustainable ones.

There are real trade-offs here. More detailed logging improves evaluation but increases privacy exposure. Richer context improves output quality but increases token cost. Streaming improves interaction control but adds state complexity.

None of these are free.

Token budgeting, caching, routing

In deliberate products, you can infer the presence of:

Token estimation before execution

Context compression strategies

Caching keyed by stable context fingerprints

Model routing based on task complexity

These systems rarely advertise cost control, but their constraints feel coherent instead of reactive.
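Two of these ideas are small enough to sketch: a cache key derived from a stable fingerprint of the context, and routing by estimated size. Everything here is an assumption for illustration. The hash is the non-cryptographic djb2 string hash, the `small`/`large` tiers are hypothetical, and the 2000-token threshold is an arbitrary placeholder.

```typescript
// Serialize with sorted keys so logically equal contexts produce the same string.
function stableStringify(value: unknown): string {
  if (value === undefined) return "null";
  if (Array.isArray(value)) {
    return `[${value.map((item) => stableStringify(item)).join(",")}]`;
  }
  if (value !== null && typeof value === "object") {
    const obj = value as Record<string, unknown>;
    const entries = Object.keys(obj)
      .sort()
      .map((key) => `${JSON.stringify(key)}:${stableStringify(obj[key])}`);
    return `{${entries.join(",")}}`;
  }
  return JSON.stringify(value);
}

// djb2 string hash: fine as a cache key, not suitable for anything security-related.
function fingerprint(value: unknown): string {
  const text = stableStringify(value);
  let hash = 5381;
  for (let i = 0; i < text.length; i++) {
    hash = ((hash << 5) + hash + text.charCodeAt(i)) | 0;
  }
  return (hash >>> 0).toString(16);
}

type ModelTier = "small" | "large"; // hypothetical tiers

// Placeholder policy: the threshold is arbitrary and would need tuning per product.
function routeModel(promptTokens: number, needsReasoning: boolean): ModelTier {
  return needsReasoning || promptTokens > 2000 ? "large" : "small";
}
```

The fingerprint only helps if the context object is built deterministically, which is one more reason to treat context construction as its own layer.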

Instrumentation: feedback, acceptance tracking, prompt metadata

Evaluation loops appear in products that:

Capture structured feedback

Track acceptance or rejection rates

Log prompt metadata for analysis

Measure regeneration frequency

Without instrumentation, degradation remains invisible until users disengage.
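A sketch of how acceptance and regeneration could be tracked per prompt version. The record shape and the decision to count an `edited` outcome as accepted are my own assumptions, and a real pipeline would send these records to an analytics store rather than aggregate them in memory.

```typescript
type Outcome = "accepted" | "edited" | "rejected" | "regenerated";

interface GenerationRecord {
  promptVersion: string; // hypothetical identifier for the prompt template used
  model: string;
  outcome: Outcome;
}

interface Counts {
  total: number;
  accepted: number;
  regenerated: number;
}

function countByPromptVersion(records: GenerationRecord[]): Record<string, Counts> {
  const counts: Record<string, Counts> = {};
  for (const record of records) {
    const current = counts[record.promptVersion] ?? { total: 0, accepted: 0, regenerated: 0 };
    current.total += 1;
    // Assumption: output the user kept after editing still counts as accepted.
    if (record.outcome === "accepted" || record.outcome === "edited") current.accepted += 1;
    if (record.outcome === "regenerated") current.regenerated += 1;
    counts[record.promptVersion] = current;
  }
  return counts;
}

function acceptanceRate(counts: Counts): number {
  return counts.total === 0 ? 0 : counts.accepted / counts.total;
}
```

Grouping by prompt version is what turns raw feedback into something comparable: a drop in acceptance rate after a prompt change becomes visible instead of anecdotal.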

Reasoning boundaries and orchestration layers

Where reasoning logic lives becomes clear under pressure, for example when a second or third AI surface is added to the same product.

Consistency across surfaces

In fragmented systems, similar tasks behave differently across surfaces. Prompt logic diverges. Output quality varies.

In cohesive systems, reasoning is centralized or clearly layered. Context assembly patterns remain consistent.

Separating inference logic from presentation

Mature architectures decouple:

Inference orchestration

Prompt construction

Retrieval logic

Presentation components

When inference logic leaks directly into UI components, scaling and debugging become painful.
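The decoupling above can be sketched with a few interfaces. All names are hypothetical and only mark where each concern lives: retrieval and the model call sit behind interfaces, prompt construction is a pure function, and the orchestrator wires them together so a UI component only ever calls one function.

```typescript
// Retrieval logic behind an interface (hypothetical).
interface Retriever {
  search(query: string): Promise<string[]>;
}

// Model access behind an interface (hypothetical), so providers can be swapped or faked.
interface ModelClient {
  complete(prompt: string): Promise<string>;
}

// Prompt construction: a pure function that is easy to test and version.
function buildSummaryPrompt(documentText: string, references: string[]): string {
  const refs = references.map((ref, i) => `[${i + 1}] ${ref}`).join("\n");
  return `Summarize the document.\n\nDocument:\n${documentText}\n\nReferences:\n${refs}`;
}

// Inference orchestration: combines retrieval, prompt construction, and the model call.
function createSummarizer(retriever: Retriever, model: ModelClient) {
  return async function summarize(documentText: string): Promise<string> {
    const references = await retriever.search(documentText);
    return model.complete(buildSummaryPrompt(documentText, references));
  };
}
```

A presentation component would receive `summarize` as a dependency and render its result, without knowing how the prompt is built or which model answers it.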

Common mistake in AI product design: the chat widget trap

A common failure mode is bolting a chat interface beside an existing workflow.

Many early AI integrations started this way: a floating chat assistant attached to an otherwise unchanged product. While useful for exploration, this pattern rarely integrates deeply with the system’s domain model.

Symptoms include:

Generated output not modeled as a domain entity

Context that does not persist meaningfully

Regeneration disconnected from artifact state

Prompt logic duplicated across features

This approach adds capability but does not reshape the workflow.

Trade-offs to name explicitly

AI-native architecture introduces real trade-offs that should be surfaced early:

Latency vs user control

Detailed logging vs privacy constraints

Persistent memory vs interface clutter

Richer context vs rising token cost

Centralized orchestration vs surface-level flexibility

Systems feel more coherent when these trade-offs are deliberate rather than accidental.

Practical example: turning “generate a summary” into a product workflow

Consider a simple feature: Generate a summary.

In a simple implementation:

Send document text to the model

Render the returned summary

In a product-oriented implementation:

Model the summary as a first-class entity linked to the document.

Assemble context from document content, user preferences, and formatting rules.

Stream the summary incrementally into a draft state.

Allow partial acceptance or inline edits.

Version each regeneration.

Log acceptance or rejection as an evaluation signal.

The surface feature is identical. The architecture is not.

I’m exploring a similar architecture while building a small application that turns retrospective notes into structured intelligence. I wrote more about my idea in From a Box to an Intelligence Layer.

If you’re building AI features, this is the architecture checklist I’d use in a design review.

AI feature architecture checklist for LLM-powered frontend systems

1. Context Construction

Is context assembled deliberately from multiple state layers?

Is pruning or summarization explicit?

2. Output Modeling

Are generated artifacts versioned entities?

Can users iterate safely without losing prior work?

3. Streaming Resilience

Are lifecycle states explicit?

Do cancellation and retries reconcile deterministically?

4. Cost Discipline

Is token usage predictable and measurable?

Are caching and routing strategies defined?

5. Evaluation Loop

Is output quality measured in production?

Are corrections captured as a structured signal?

6. Reasoning Boundaries

Is orchestration layered and decoupled from presentation?

Closing observation

The most interesting shift is not that AI appears in more products.

It is that the frontend increasingly shapes how reasoning happens.

Rendering is no longer the only concern. Frontend systems now participate in context orchestration, probabilistic state handling, cost control, and evaluation.

When these concerns are treated intentionally, products feel calm and coherent. When they are incidental, the gap shows.

Frontend systems increasingly shape how models reason by controlling context, state, and interaction loops.

AI-native architecture is less about model sophistication and more about systems discipline across state management, context orchestration, and evaluation.

That discipline is quickly becoming part of modern frontend engineering.

Until next time,

Stefania


The chat widget trap scales up too. In retail AI I keep seeing PoCs built around a floating assistant - isolated, clean, impressive in a demo.

Then production needs context from inventory systems, pricing rules, order history. The widget was never built for any of that. The architecture conversation happened after the demo, not before.